Overview

Developed an AI-powered system that automatically assesses students' English presentations, and deployed it as a real-time inference service on Azure ML that reached 30,000 monthly active users (MAU).

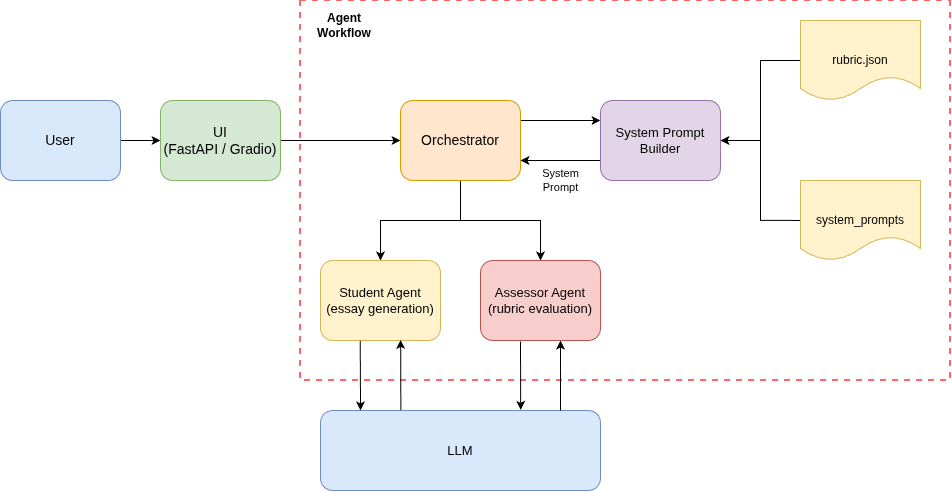

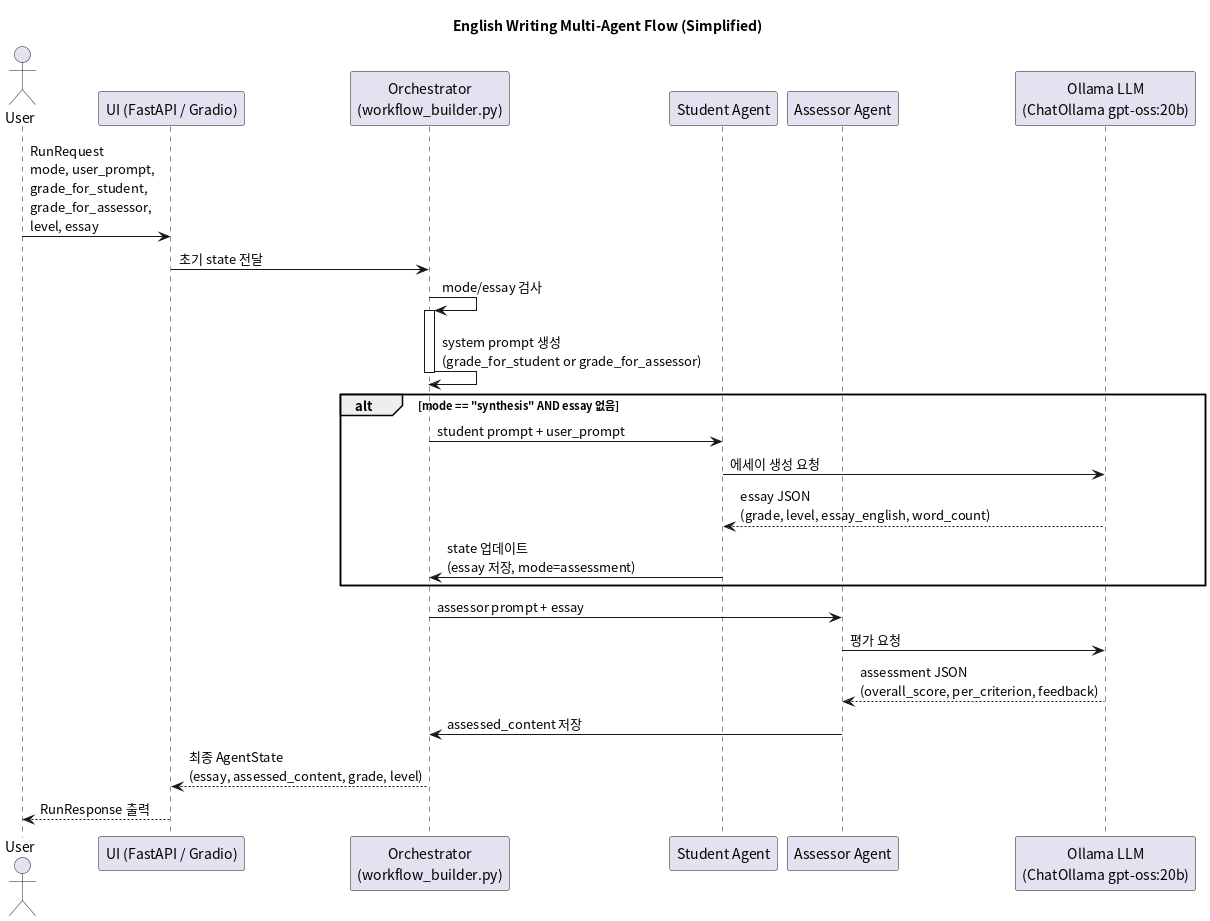

Architecture

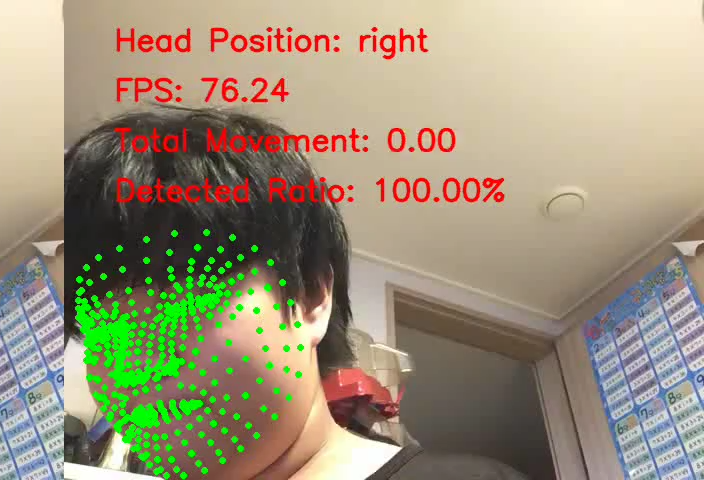

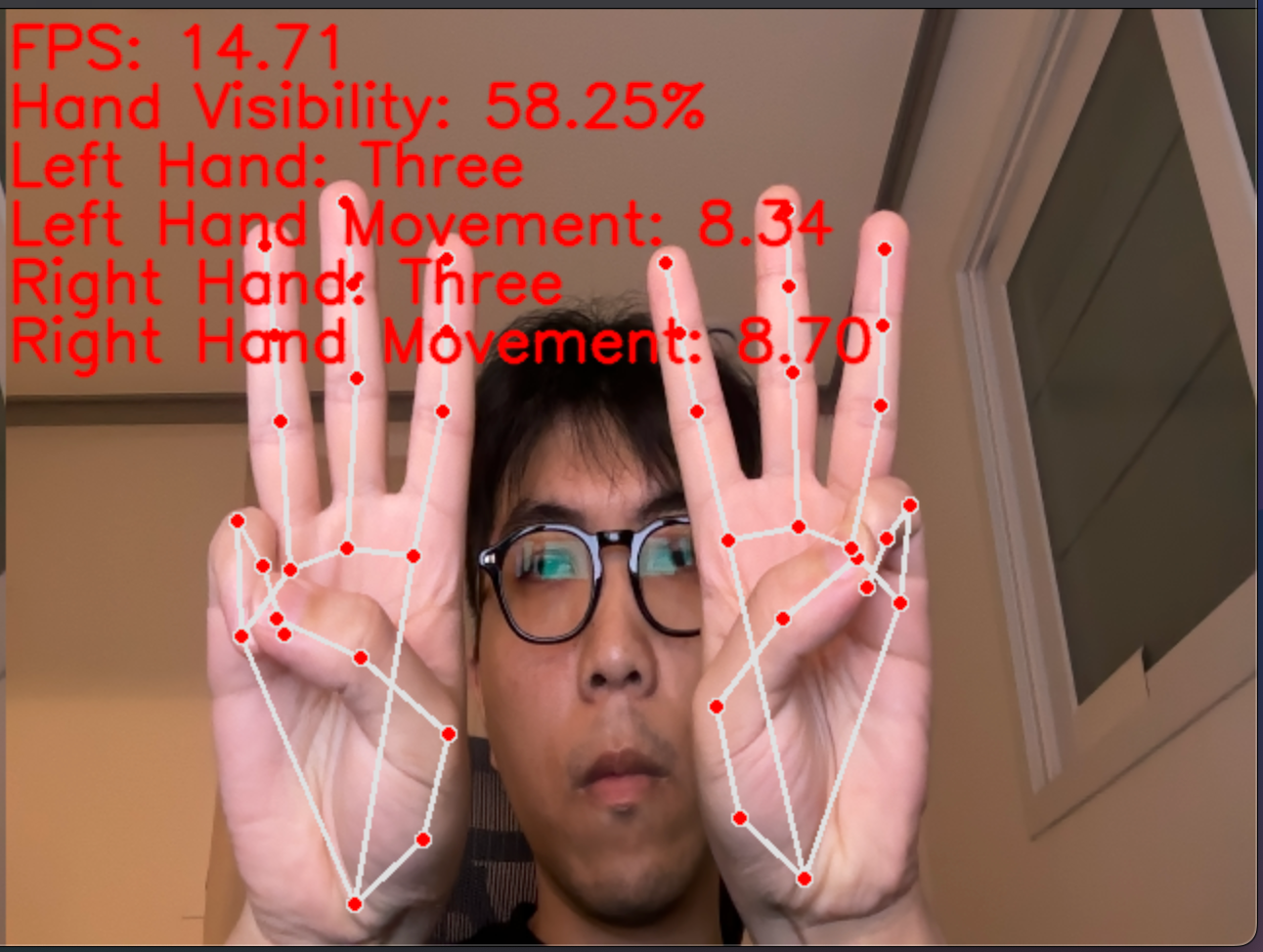

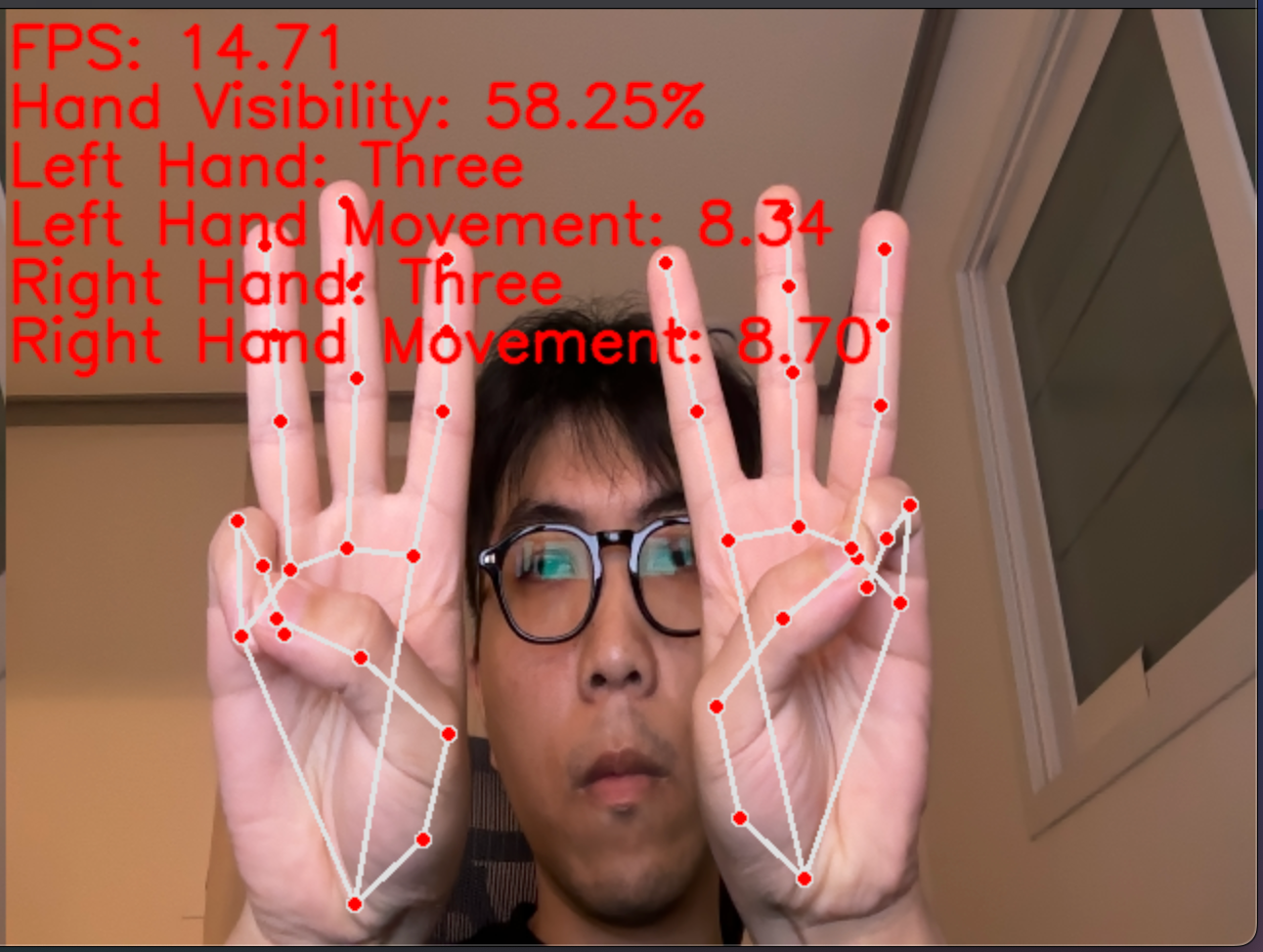

Presentation Attitude Assessment Demo

Automatically evaluates a presenter's attitude in real time by analyzing gaze direction, hand gestures, and movements with MediaPipe-based face and hand recognition.

Gaze Direction Tracking
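One common way to estimate horizontal gaze from face landmarks is to measure where the iris center sits between the two eye corners. The sketch below is a minimal illustration of that idea, not the project's actual implementation. It assumes normalized (x, y) image coordinates such as those MediaPipe Face Mesh reports, and it assumes the caller has already selected the iris-center and eye-corner points (with iris refinement enabled, these are commonly taken as landmarks 468/473 for the iris centers and 33/133 and 362/263 for the eye corners, but verify against the Face Mesh landmark map). The `margin` threshold is a hypothetical tuning parameter.

```python
from statistics import mean
from typing import Sequence, Tuple

# Normalized (x, y) image coordinates, as MediaPipe landmarks report them.
Point = Tuple[float, float]


def eye_ratio(iris: Point, corner_a: Point, corner_b: Point) -> float:
    """Horizontal iris position between the eye corners.

    0.0 means the iris is at the image-left corner, 1.0 at the image-right corner.
    Corner order does not matter; they are sorted by x.
    """
    left_x, right_x = sorted((corner_a[0], corner_b[0]))
    width = right_x - left_x
    if width <= 0:
        raise ValueError("eye corners must differ in x")
    ratio = (iris[0] - left_x) / width
    # Clamp so landmark jitter slightly outside the eye stays in [0, 1].
    return min(1.0, max(0.0, ratio))


def gaze_direction(eyes: Sequence[Tuple[Point, Point, Point]], margin: float = 0.12) -> str:
    """Classify gaze as 'left', 'center', or 'right' in image coordinates.

    `eyes` holds one (iris_center, corner_a, corner_b) tuple per eye;
    the per-eye ratios are averaged before thresholding.
    """
    r = mean(eye_ratio(*eye) for eye in eyes)
    if r < 0.5 - margin:
        return "left"
    if r > 0.5 + margin:
        return "right"
    return "center"
```

Because the labels are in image coordinates, whether "left" means the presenter's left depends on whether the camera feed is mirrored. In practice a per-frame label like this would likely be smoothed over a time window before it feeds an attitude score.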

Hand Gesture Recognition
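A simple rule-based way to recognize gestures from MediaPipe's 21-point hand model (landmark 0 is the wrist; fingertips 8, 12, 16, 20 and PIP joints 6, 10, 14, 18 belong to the index, middle, ring, and pinky fingers) is to treat a finger as extended when its tip lies farther from the wrist than its PIP joint. The sketch below assumes that heuristic; the thumb is deliberately skipped because this distance test is unreliable for it, and the gesture labels (`fist`, `pointing`, `open_palm`) are hypothetical examples rather than the categories the project actually scores.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]

WRIST = 0
# (tip, pip) landmark indices in MediaPipe's 21-point hand model.
FINGERS: Dict[str, Tuple[int, int]] = {
    "index": (8, 6),
    "middle": (12, 10),
    "ring": (16, 14),
    "pinky": (20, 18),
}


def _dist(a: Point, b: Point) -> float:
    return math.hypot(a[0] - b[0], a[1] - b[1])


def extended_fingers(landmarks: Sequence[Point]) -> List[str]:
    """Return the names of fingers whose tip is farther from the wrist than their PIP joint."""
    if len(landmarks) != 21:
        raise ValueError("expected 21 hand landmarks")
    wrist = landmarks[WRIST]
    return [
        name
        for name, (tip, pip) in FINGERS.items()
        if _dist(landmarks[tip], wrist) > _dist(landmarks[pip], wrist)
    ]


def classify_gesture(landmarks: Sequence[Point]) -> str:
    """Map the set of extended fingers to a coarse (hypothetical) gesture label."""
    up = extended_fingers(landmarks)
    if not up:
        return "fist"
    if up == ["index"]:
        return "pointing"
    if len(up) == len(FINGERS):
        return "open_palm"
    return "other"
```

Comparing distances to the wrist, instead of raw y coordinates, keeps the rule roughly independent of hand rotation, which matters when presenters gesture sideways.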

Key Achievements

- [MLOps] Deployed a real-time inference system on Azure ML, reaching 30K MAU

- [LLM Agent] Generated synthetic student data using a multi-agent approach

- [Vision] Built a diffusion-based image generation pipeline, applying quantization to compress the models

- [Audio] Fine-tuned an NVIDIA Parakeet-based speech-to-text (STT) model to improve recognition of non-native children's speech

- [Audio] Researched fine-tuning of TTS models (experimental stage)

- [Search] Designed and implemented a hybrid search system combining keyword-based and vector search

- [Vision] Automated student presentation attitude assessment using MediaPipe-based face recognition and pose tracking
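The quantization item above does not say which scheme was used. As a generic illustration only, the sketch below shows symmetric per-tensor int8 post-training quantization, one of the simplest compression schemes: each weight is stored as an integer `q` in [-127, 127] together with a single float `scale`, so that `w ≈ scale * q`. The function names are hypothetical, and a real diffusion pipeline would rely on a framework's quantization tooling rather than pure Python.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: w ~= scale * q with q in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Map the largest magnitude to 127; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Reconstruct approximate float weights from int8 values and their scale."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.0, 1.27]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
```

Compared with float32, each weight then takes one byte instead of four, and rounding bounds the per-weight reconstruction error by half of `scale`.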

Tech Stack

- Cloud: Azure ML, Blob Storage

- Frameworks: PyTorch, Hugging Face, LangChain, LangGraph

- Models: Diffusion models, NVIDIA Parakeet, MediaPipe

- Serving: FastAPI, ONNX

- Search: Hybrid Search (BM25 + Vector)
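A hybrid search system has to merge two differently scaled result lists: BM25 keyword scores and vector similarities. The sketch below shows one common way to do that, assuming Okapi BM25 (with the typical defaults `k1=1.5`, `b=0.75`), cosine similarity over precomputed embeddings, and reciprocal rank fusion (RRF, constant `k=60`) to combine the two rankings. The source does not state which fusion method the project used, so RRF here is an assumed choice, and all function names are hypothetical.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, List, Sequence


def bm25_scores(query: List[str], docs: List[List[str]], k1: float = 1.5, b: float = 0.75) -> List[float]:
    """Okapi BM25 score of each tokenized document for a tokenized query."""
    if not docs:
        return []
    n = len(docs)
    avgdl = sum(len(d) for d in docs) / n or 1.0  # guard against all-empty docs
    df = Counter(term for d in docs for term in set(d))  # document frequency
    scores = []
    for d in docs:
        tf = Counter(d)
        s = 0.0
        for term in query:
            if term not in tf:
                continue
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1.0)
            f = tf[term]
            s += idf * f * (k1 + 1) / (f + k1 * (1 - b + b * len(d) / avgdl))
        scores.append(s)
    return scores


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def rank(scores: List[float]) -> List[int]:
    """Document indices sorted by descending score (stable for ties)."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)


def hybrid_search(
    query_tokens: List[str],
    query_vec: Sequence[float],
    doc_tokens: List[List[str]],
    doc_vecs: List[Sequence[float]],
    k: int = 60,
    top_n: int = 3,
) -> List[int]:
    """Fuse keyword and vector rankings with reciprocal rank fusion."""
    keyword_rank = rank(bm25_scores(query_tokens, doc_tokens))
    vector_rank = rank([cosine(query_vec, v) for v in doc_vecs])
    fused: Dict[int, float] = defaultdict(float)
    for ranking in (keyword_rank, vector_rank):
        for pos, doc_id in enumerate(ranking):
            fused[doc_id] += 1.0 / (k + pos + 1)
    # Highest fused score first; break ties by document index.
    return sorted(fused, key=lambda i: (-fused[i], i))[:top_n]
```

RRF uses only rank positions, so it avoids normalizing BM25 scores against cosine similarities; a weighted sum of min-max normalized scores is a common alternative when one signal should dominate.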